How often does Google crawl a website, and how long can you expect to wait for new content to be indexed and appear in the search results?

It’s a common question in the SEO community. Although crawl rates and index times vary based on a number of factors, the average crawl time can be anywhere from one day to four weeks.

Google’s algorithm is a program that uses over 200 factors to decide where websites rank against others in Search. These factors are pieces of information that Googlebots collect from each site during a ‘Google crawl’, and they are taken into consideration when the site is filed in Google’s ‘index’.

We know that the quicker sites are crawled and indexed, the quicker they are served up to users in organic search. So, how can we optimise our sites to encourage more frequent crawling?

The web is like an ever-growing library of information. Google uses web crawlers to discover each website and gather data about it so that it can be filed appropriately in the library (the index). Once a site has been indexed by Google, it is added to the rankings for all of the words that are featured in its content.

Understanding crawl rates and index times can help website owners make better, more informed decisions. While we do not know the exact formula for Google’s algorithm, we do know that improved crawlability and more frequent indexing are closely correlated with improved organic search rankings. This article will discuss how frequently websites are crawled, how they are crawled, and how to get pages indexed faster.

How Does Google Crawl Websites?

Google uses the information on your website to determine which searches your content is relevant to and how relevant it is. The first step of this process is finding out what pages actually exist on the web. While there isn’t a central filing system of all of the web pages online, Google has its own index and is constantly searching for new pages to add to it – this process of uncovering new pages is known as crawling.

Some pages are known to Google because they have been crawled previously. New pages, however, are not as easily found and will usually be discovered in one of two ways:

- Google following a link from a known page to a new page

- A website owner submitting a list of pages (their sitemap) for Google to crawl

Once a page has been discovered, Google will attempt to understand what the page is about. The content is analysed, images are catalogued, and videos are studied to get an idea of the intentions of the page and where it is relevant. This process is known as indexing. The collected information is stored in Google’s index, an enormous database.
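The first discovery route above, following links from known pages to new ones, can be illustrated with a small sketch. This is not how Googlebot is implemented; it is a simplified breadth-first crawl using Python's standard library. The `fetch` callable, the `PAGES` dictionary, and the example.com URLs are all hypothetical stand-ins for real HTTP requests.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def discover(seed_urls, fetch):
    """Breadth-first discovery: start from known pages and follow links.

    `fetch` is a hypothetical callable that returns the HTML for a URL,
    or None if the page cannot be retrieved.
    """
    known = set(seed_urls)
    queue = deque(seed_urls)
    order = []
    while queue:
        url = queue.popleft()
        order.append(url)
        html = fetch(url)
        if html is None:
            continue
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in known:
                known.add(absolute)
                queue.append(absolute)
    return order


# Toy in-memory "web" standing in for real HTTP requests (illustrative only).
PAGES = {
    "https://example.com/": '<a href="/blog">Blog</a> <a href="/about">About</a>',
    "https://example.com/blog": '<a href="/blog/new-post">New post</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog/new-post": "",
}

crawl_order = discover(["https://example.com/"], PAGES.get)
print(crawl_order)
```

In this toy example the new post is only found because the blog page links to it, which is why a page with no inbound links and no sitemap entry can go undiscovered.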

How Often Does Google Crawl Websites?

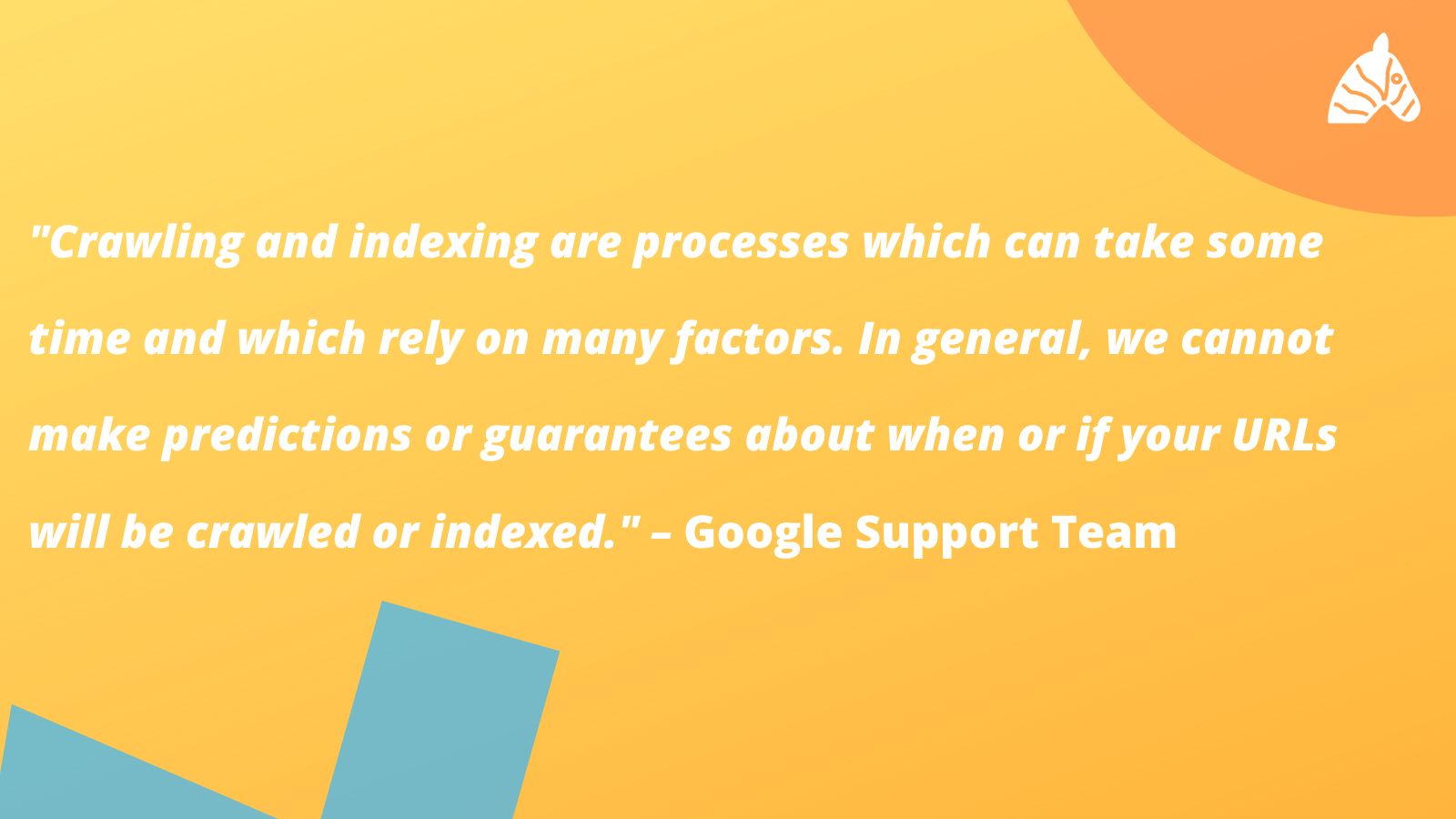

Google crawl rates and index times vary based on a range of factors; however, the average crawl time can be anywhere from one day to four weeks.

From what we can understand, URLs are crawled at different rates. While a page can be crawled and indexed overnight, many websites (particularly small or newly established sites) can wait months to be indexed.

The main factors influencing when and how often a site is crawled are the site’s popularity, the crawlability, and the structure of the site. Older sites with established domain authority, plenty of backlinks, and a solid foundation of quality content are likely to be crawled more frequently than new websites.

How to Get Google to Crawl Your Website

Google’s crawlers come across billions of new pages and sites every day. As you can imagine, it would be virtually impossible to have every page crawled every day – Google needs to use its resources wisely. If a page has errors or usability problems, bots will be less inclined to crawl the site. If bots have trouble finding the information they are looking for, crawls will be performed less frequently on the site. The same applies to the quality of content – if bots are unable to access, read, or find any relevant content, the crawlability and usability of the site are compromised.

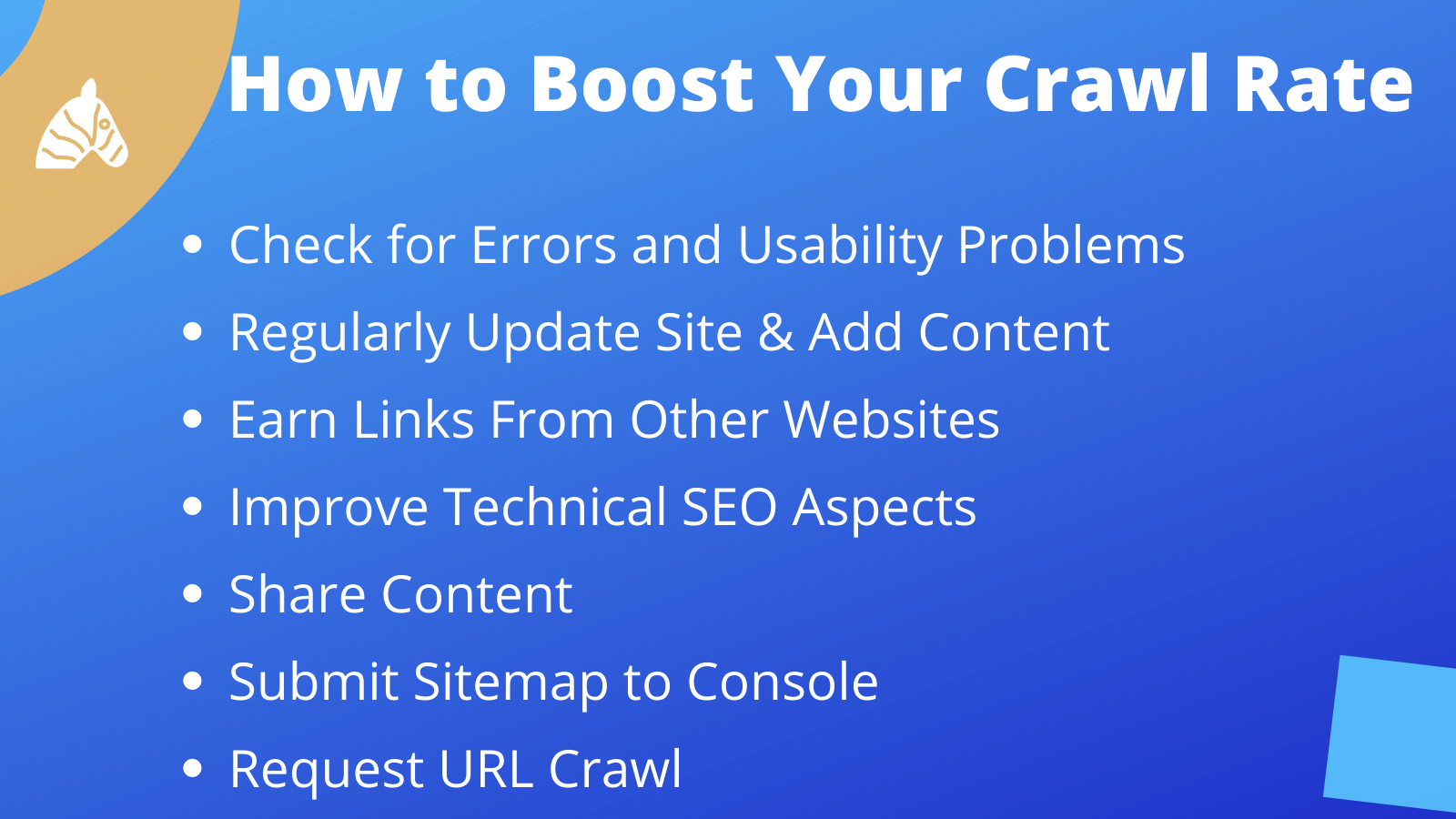

While we cannot follow an exact formula for website indexing, research has shown consistent patterns that we can work from to boost crawl rate. Making some adjustments to your website can encourage Google to crawl your site more often.

Check for errors and usability problems

Webmasters can use Search Console to check for usability problems or possible server connectivity errors. Fixing those issues will improve the crawlability of the pages. Some of these errors may be fairly straightforward to fix, while others may require an SEO expert to dissect them and judge whether they are worth fixing.
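Alongside Search Console, a quick script can give a rough first pass over your own URLs. The sketch below is only an illustration: `fetch_status` and `triage` are hypothetical helper names, and the category labels are our own rather than anything Search Console reports. It uses Python's standard `urllib` to request a page and groups the response by HTTP status class.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for a URL, or None if the server is unreachable."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses are raised as exceptions
    except (urllib.error.URLError, OSError):
        return None  # DNS failure, refused connection, timeout, and similar


def triage(status):
    """Group a status code into a rough category (labels are illustrative)."""
    if status is None:
        return "unreachable"
    if 200 <= status < 300:
        return "ok"
    if 300 <= status < 400:
        return "redirect"
    if 400 <= status < 500:
        return "client error"
    if 500 <= status < 600:
        return "server error"
    return "unexpected"


# Example with hard-coded status codes, so no network access is needed here.
report = {code: triage(code) for code in (200, 301, 404, 503, None)}
print(report)
```

To check a live page you would call `triage(fetch_status(url))` for each URL on your site. Note that `urlopen` follows redirects automatically, so a redirected page reports the status of its final destination.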

Regularly update your website

Regularly updating your website lets Google know that your site is still active and allows the bots to learn more about your site. Having an extensive and well-linked sitemap full of fresh content encourages bots to interact with new pages and crawl them regularly for new information.

Earn Links

Inbound links pointing to your site allow Google to follow the trail from a known site to a new site and collect the new information. An inbound link also tells Google that your site and content hold some authority – backlinks act as a vote of confidence from one site to another. A steady stream of diverse incoming links is one way to get your website on Google’s radar.

Technical SEO

Taking care of the technical aspects of your content improves the crawlability of your site. Make crawling easier for Google by writing clear and concise titles, using short URLs, and enhancing page load speed.

Can I Ask Google to Crawl My Website?

Yes, but that doesn’t mean that it will. Webmasters can submit their sitemap in Google Search Console to encourage bots to crawl the site. The sitemap lays out all of the content on the website to help the bots work out which information is most relevant, when pages were last updated, and how regularly content is created.
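For sites whose CMS does not generate a sitemap automatically, one can be built with a few lines of code. The following is a minimal sketch using Python's standard library and the sitemaps.org XML format; the `build_sitemap` function name and the example.com URLs and dates are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs.

    `last_modified` is a date string in YYYY-MM-DD form.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; replace with your own URLs and modification dates.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-03-01"),
    ("https://example.com/blog/new-post", "2024-03-12"),
])
print(sitemap_xml)
```

The resulting text is typically saved as `sitemap.xml` at the root of the site, and its URL is then submitted in Search Console.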

Can I Submit a Page for Google to Crawl?

Webmasters can also submit individual pages. If you have recently made changes to your site, you can use the URL Inspection tool in Search Console to request indexing for a specific URL. This will encourage bots to recrawl your page and can speed up the discovery of new pages. Once your domain has been claimed, you can request up to 10 individual URL crawls per day.

When Did Google Crawl My Site?

Google’s free Search Console tool gives webmasters the ability to examine and understand how their website performs. Search Console also gives webmasters the option to view their crawl stats (when Googlebots last visited the site).

To find out when Google last crawled your website, you can enter any URL from your site into the search bar at the top of the Search Console page. From there, you can view your crawl stats under the “coverage” tab on the left side of the dashboard. Search Console will provide you with the date and time of the last crawl, as well as which type of bot performed the crawl.
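If you have access to your server's access logs, they offer a second way to see when Googlebots visited. The sketch below assumes the common "combined" log format used by Apache and Nginx; the `last_googlebot_visits` function and the sample log lines are hypothetical. It matches on the user-agent string alone, which can be spoofed, so treat the results as indicative rather than verified.

```python
import re
from datetime import datetime

# Assumes the "combined" access log format; adjust the pattern for other formats.
LOG_PATTERN = re.compile(
    r'\[(?P<time>[^\]]+)\] "(?:GET|HEAD) (?P<path>\S+) [^"]*" '
    r'\d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def last_googlebot_visits(log_lines):
    """Return {path: datetime of the most recent request claiming to be Googlebot}."""
    latest = {}
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        seen = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z")
        path = match.group("path")
        if path not in latest or seen > latest[path]:
            latest[path] = seen
    return latest


# Made-up sample lines for illustration.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Mar/2024:06:25:24 +0000] "GET /blog HTTP/1.1" 200 5123 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [11/Mar/2024:09:00:00 +0000] "GET /blog HTTP/1.1" 200 5123 '
    '"-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [12/Mar/2024:14:02:10 +0000] "GET /blog HTTP/1.1" 200 5123 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

visits = last_googlebot_visits(SAMPLE_LOG)
for path, when in visits.items():
    print(path, when.isoformat())
```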

How Long Does Google Take to Crawl a Site?

While there is no direct guidebook on how to get a website noticed, crawled, and indexed by Google, there are improvements every webmaster can make to better their chances of getting their website crawled. Google’s main objective is to serve up the best quality information and user experience to searchers – you can help by optimising your site structure and regularly providing exceptional content that puts users first.